Transformative Opportunities in A.I.

Artificial Intelligence is a major shift in technical capability that will bring significant change to the digital product landscape by making an entirely new class of solutions possible. I anticipate this will create a broad and enduring wave of innovation that dominates the digital product landscape for the next generation, disrupting incumbent services and distribution models much as the Internet has since the 1990s. I’m writing this to capture my thoughts about what AI is, how it works at a high level, why I anticipate it will be so transformative, and which major use cases it will impact.

What Is Artificial Intelligence

To begin, I need to define what Artificial Intelligence is. When I use this term, I’m referring to the applied use of foundational technologies such as Machine Learning and Deep Learning, which are computational methods built upon statistical modeling that leverage large sets of data. Put another way, you can take data sets and build statistical models that identify patterns in that data and thus predict future patterns. This is essentially what is happening behind the scenes with Natural Language Processing (NLP) or with image recognition, which can identify and tag objects in a photograph.

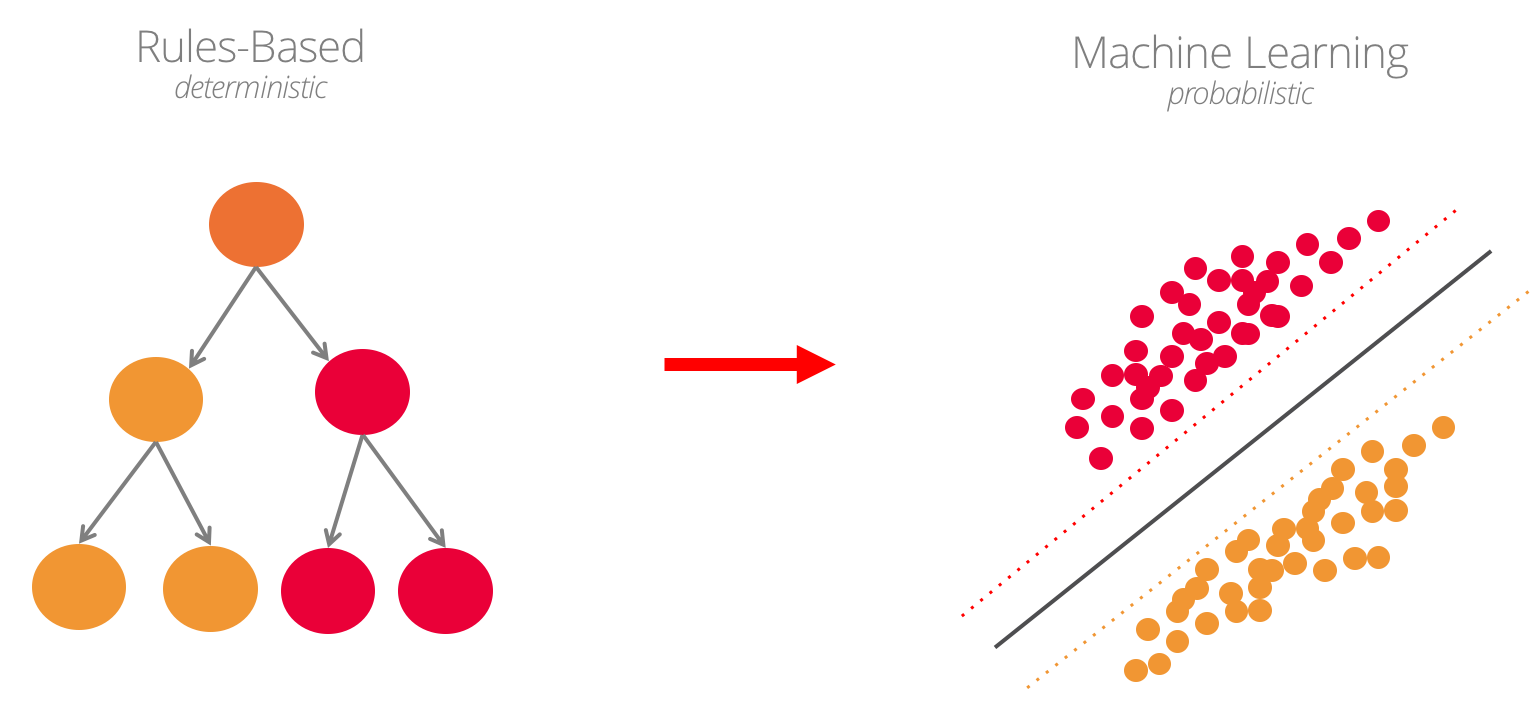

This is fundamentally different from traditional programming, which is rules-based. Rules-based systems work great for certain types of problems, but they require people to explicitly define every relevant scenario in advance in order to work properly, and that limits the types of problems that can reasonably be solved in this way. Machine Learning turns that approach on its head. Instead of defining every possible scenario, you feed a lot of data into a predictive model and allow it to begin generalizing patterns. That enables new solutions, such as identifying objects in unlimited scenarios or understanding the spoken word independent of inflection, background noise, and accent.

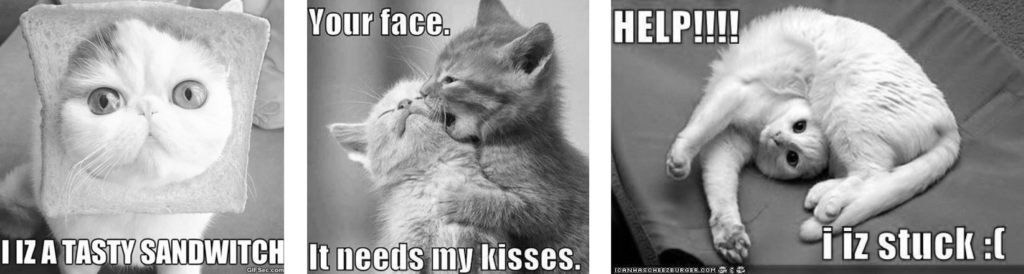

Consider the following examples if you wanted to recognize cats in images. In the first image, the cat’s ears are blocked by a piece of bread. In the second, there are two cats, 3 eyes, and 3 ears. In the last photo, the ears are blocked and the head is in the wrong location. There is no possible way to write rules that account for every scenario, but a predictive model could reasonably classify an image with a level of confidence and tell you that it’s probably a cat.
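
To make that contrast concrete, here is a minimal sketch in Python. Everything in it is an assumption made up for illustration: the four hand-picked features, the tiny training set, and the function names. A real image classifier learns from raw pixels with far more data, so treat this only as a toy comparison between an explicit rule and a model that returns a confidence.

```python
import math

# Hypothetical hand-labelled features for each image:
# (visible_ears, visible_eyes, has_whiskers, has_fur) -> 1 if cat, else 0
TRAINING_DATA = [
    ((2, 2, 1, 1), 1),  # typical cat
    ((0, 2, 1, 1), 1),  # cat with its ears hidden
    ((2, 1, 1, 1), 1),  # cat in profile, one eye visible
    ((1, 2, 1, 1), 1),  # cat with one ear out of frame
    ((2, 2, 0, 1), 0),  # some other furry animal (toy example)
    ((2, 2, 0, 0), 0),  # person
    ((0, 0, 0, 0), 0),  # loaf of bread
    ((0, 2, 0, 1), 0),  # stuffed toy
]


def rule_based_is_cat(features):
    """Explicit rule: a cat must show two ears, two eyes, whiskers and fur."""
    ears, eyes, whiskers, fur = features
    return ears == 2 and eyes == 2 and whiskers == 1 and fur == 1


def predict(features, weights, bias):
    """Return the model's confidence (0 to 1) that the image shows a cat."""
    score = sum(w * x for w, x in zip(weights, features)) + bias
    return 1.0 / (1.0 + math.exp(-score))


def train(data, learning_rate=0.5, epochs=5000):
    """Fit logistic regression weights with batch gradient descent."""
    n_features = len(data[0][0])
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for features, label in data:
            error = predict(features, weights, bias) - label
            for j, x in enumerate(features):
                grad_w[j] += error * x
            grad_b += error
        # Average the gradient over the data set and take one step.
        weights = [w - learning_rate * g / len(data)
                   for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / len(data)
    return weights, bias


weights, bias = train(TRAINING_DATA)

# Ears hidden and only one eye visible: this case is not in the training data.
occluded_cat = (0, 1, 1, 1)
print("rule says cat:", rule_based_is_cat(occluded_cat))
print("model confidence:", round(predict(occluded_cat, weights, bias), 2))
```

The rule rejects the occluded cat because it was never written to handle that case, while the model generalizes from the labelled examples and reports how confident it is instead of a hard yes or no.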

And this is similar to how you’d teach a young child, by showing them a large set of examples until they eventually understand. In this case though, it is merely statistical probability modeling, applied to use cases that we traditionally think of as being the domain of humans. Those traditionally accepted barriers between what people and machines are good at are about to change radically though, as we move away from systems limited by explicit rules definition.

Going back to the original question then: what is Artificial Intelligence? To me, that merely means the application of statistical modeling to solve uniquely human problems. That might mean perceiving objects in an image or translating spoken language, and then classifying those things into categories that give meaning to what was just understood. It might further mean calling an API or looking up additional data to add further meaning, or taking action when patterns are recognized.

So that, in a nutshell, is what Artificial Intelligence is. It is not conscious, however, nor does it have its own will. It is like a zombie that merely takes actions according to the bounds of its system. At the end of the day it is still just a computer making logical computations, only of a new, abstracted, complex, data-oriented type.

Why Now?

Machine Learning and its core statistical modeling principles have been around since the 1950s. In fact, Alan Turing proposed what became known as the Turing Test, a way to determine whether a machine had approximated human intelligence, in 1950. A lot of research and investment followed for many years, until the concepts were generally regarded as non-viable and investment dried up during the 1980s (a period referred to as the AI winter).

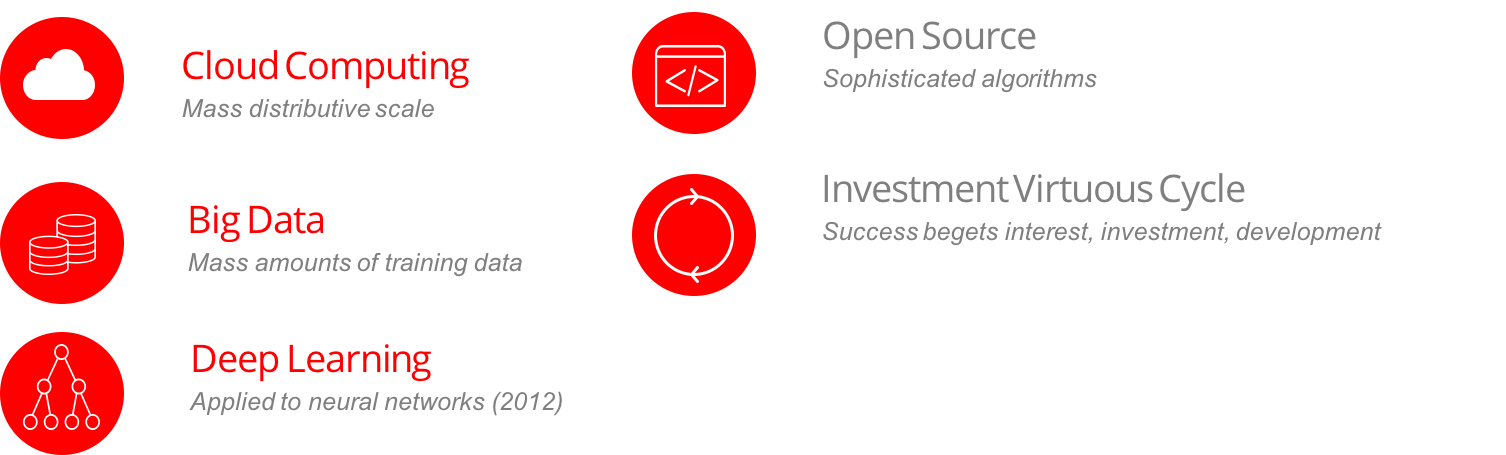

It really wasn’t until the 2010s that these theories found the practical means to begin working. There were two practical limitations that technology was finally able to move past. The first was the massive quantity of data required to train systems to recognize patterns effectively. It wasn’t until the Big Data wave of the early 2000s that we built the capabilities to handle such quantities of data. The second was computation: cloud computing and ready access to processors like GPUs, which are well suited to machine learning workloads, suddenly made it viable to process these large data sets. What really tipped the scales from there was the innovation of Deep Learning, which rose to prominence in the early 2010s with efforts such as Google Brain and capitalized on all of these infrastructural capabilities. That is when things really started to take off.

Because of its strong ties to academia, Machine Learning quickly began to see research tick up, and soon after, industry and academics alike were releasing open source frameworks and data sets to accelerate the community’s efforts. Investments soon followed, and a virtuous cycle of innovation and investment was in full swing by the mid-2010s.

Adoption & Transformation

I see profound potential for innovation and disruption in the years to come – on par with or perhaps even exceeding the impact the Internet has had upon society and industry.

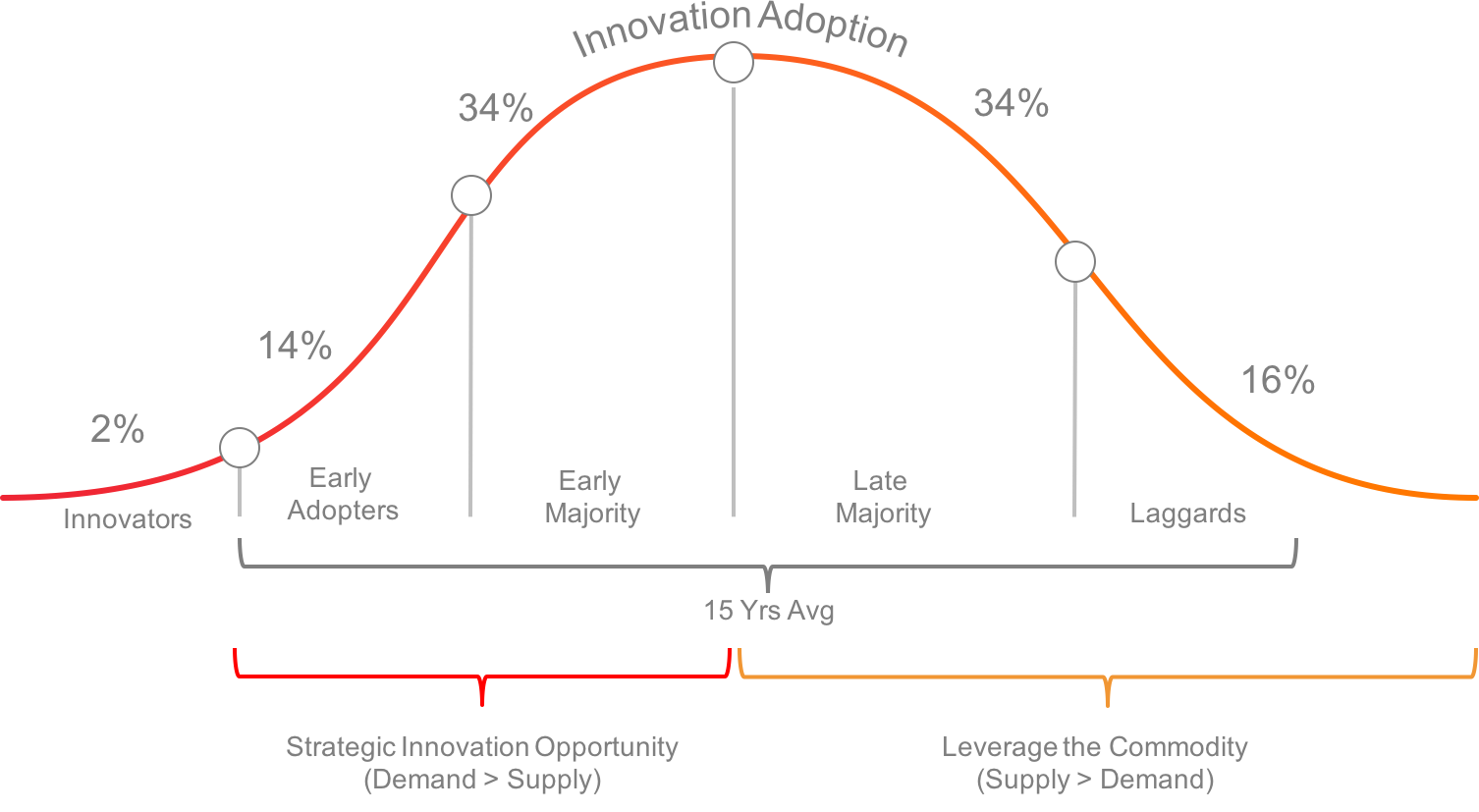

Consider Everett Rogers’s Innovation Adoption Curve as a conceptual model. Rogers mapped out the stages of adoption, beginning with innovators, continuing through early adopters and the early and late majority, and ending with the laggards, who accept the technology once it has become ubiquitous. Applying this model to technology, I’ve casually observed that this adoption cycle lasts about 12-15 years on average and that the ‘capitulation’ point, where supply and demand invert, is roughly midway through the curve.

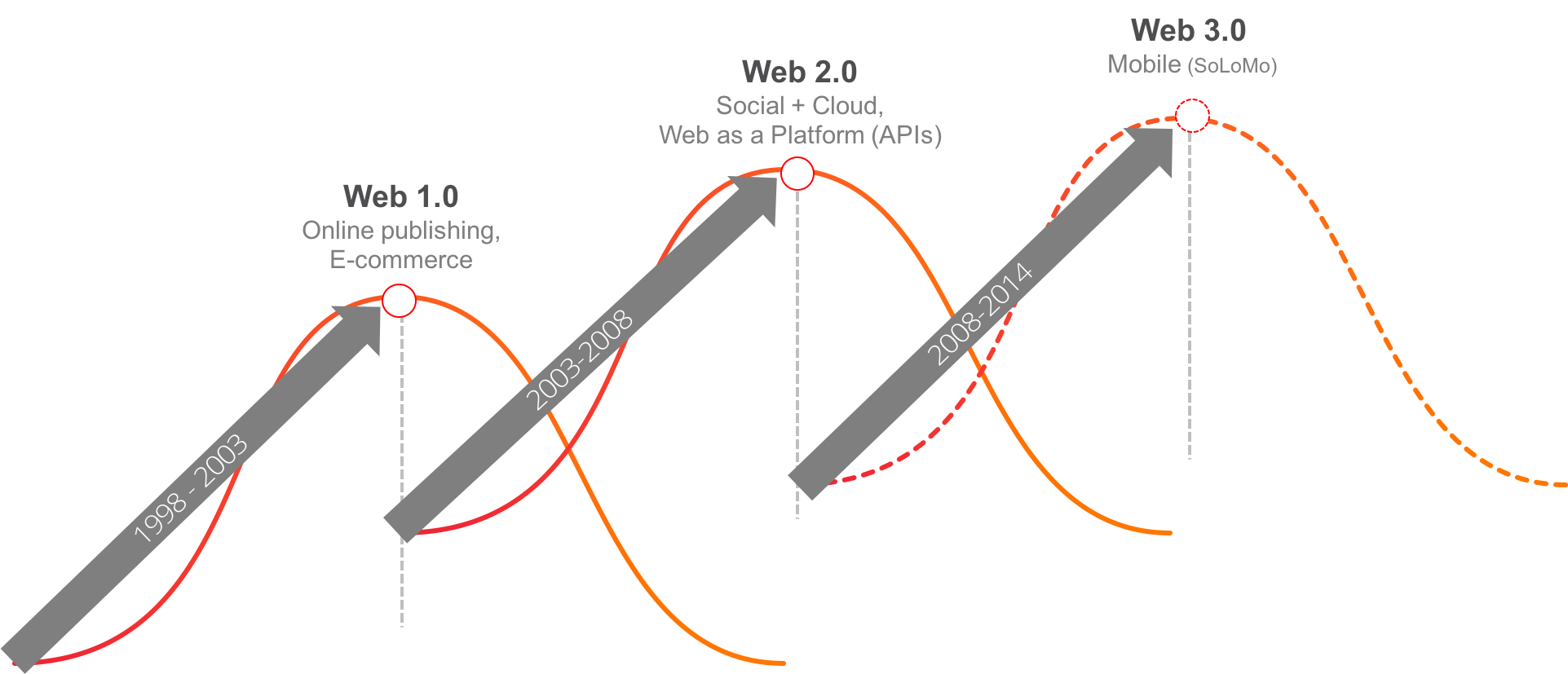

With the Internet, the first big wave began in the late 1990s and brought the initial online publishing and e-commerce giants to the stage. The next reverberating wave was “Web 2.0”, which embodied three things: social community interaction rather than 1-directional communication, software moving to cloud-based subscriptions (SaaS), and APIs that act as building blocks, enabling more rapid application development by snapping those blocks together. Finally, by the late 2000s, we were beginning to see what I call Web 3.0 emerge, which is embodied by Mobile and the bringing together of social, mobile, and local data (aka SoLoMo). Looking at this collectively, you can see that whereas a typical innovation lifecycle is 10-12 years, the Internet was like a major earthquake that set off multiple aftershocks, which extended the full life cycle of that innovation wave to nearly 20 years!

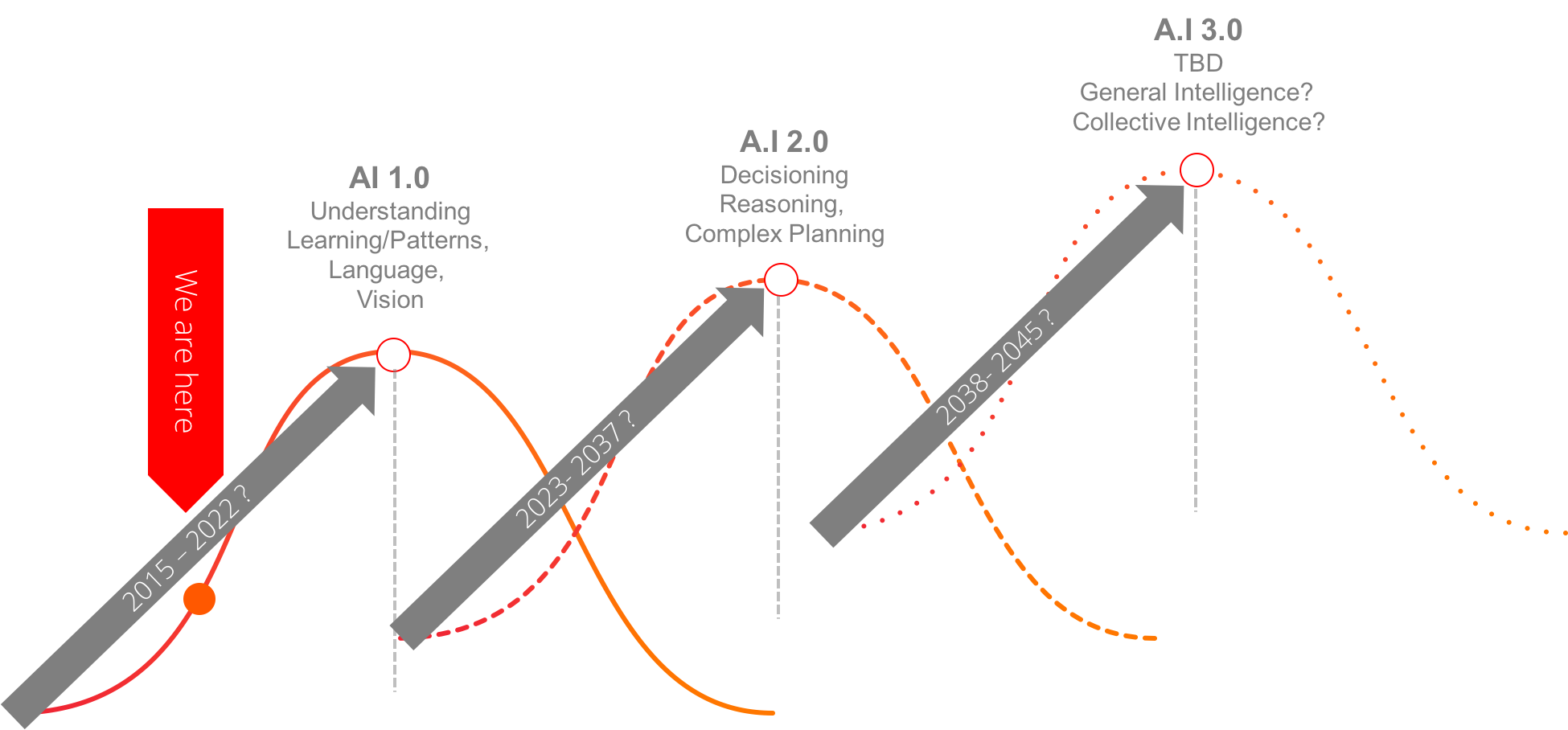

When I look at what is happening in A.I. today, I see early-stage applications that are already transformative. But they’re also the building blocks for creating much more profound solutions down the road. For example, e-commerce companies are right now mastering image recognition, which makes it possible for a device to recognize shoes or a shirt. The nuance isn’t quite there yet to recognize brand, pattern, or cut while passing someone on the street, but companies are actively working on that. Imagine the impact on online commerce when you can discover and purchase that perfect shirt while just walking down the street, rather than having to search for it later when you get home. The disruptive effect on ‘traditional e-commerce’ (how’s that for coming full circle) will be palpable, and the first companies to bring this to market will be able to step in front of other retailers with the sale before the customer even goes online to search for the item!

The second major wave I anticipate will build upon these basic pattern recognition and classification capabilities to plan and make assistive decisions based on this understanding. Imagine, for example, a digital assistant that understands and anticipates your needs and is context-aware through sensors, sound, and optics. Let’s take this a step further with a digital assistant that knows your shopping preferences, identifies products that match them as you walk down the street, and saves them in a curated list for you to review when you get home. So whereas AI 1.0 is about recognizing patterns, AI 2.0 will begin to extend those abilities into making assistive decisions, a move toward semi-automation.

Finally, A.I. 3.0 will be when these capabilities really start to snap together. So far these applications have been of a ‘narrow intelligence’ type, meaning they primarily focus on solving one type of problem at a time. The amazing thing about these predictive systems, though, is that they never die as people do, and they can be networked and tapped into by other systems that combine and extend their capability. And so my prediction for AI 3.0 is that we’ll be building intelligent aggregated solutions on top of intelligent and capable AI systems, just like we did in Web 3.0 by mashing up all of the SaaS APIs. By this point you have ubiquitous and networked “general” intelligence that possesses endless information, can immediately predict future outcomes, and can make optimal decisions accordingly on just about anything. Think biodevices that make it possible to think and instantly access these vast knowledge and intelligence resources as an extension of your own natural abilities.

What Comes Next

I predict a lot is going to change in the next 30 years, owing largely to the capabilities of A.I. The types of problems that you can solve with Artificial Intelligence are qualitatively different from what we’ve tackled in the past, and the applications are endless and will touch just about every industry. From the product and entrepreneurial perspectives, this is where it gets interesting. As Product people or as entrepreneurs, the question to ask is whether there is a new opportunity to solve problems, or the potential to disrupt existing solutions through transcendent innovation. Consider your industry and where there might be an opportunity to apply Artificial Intelligence.

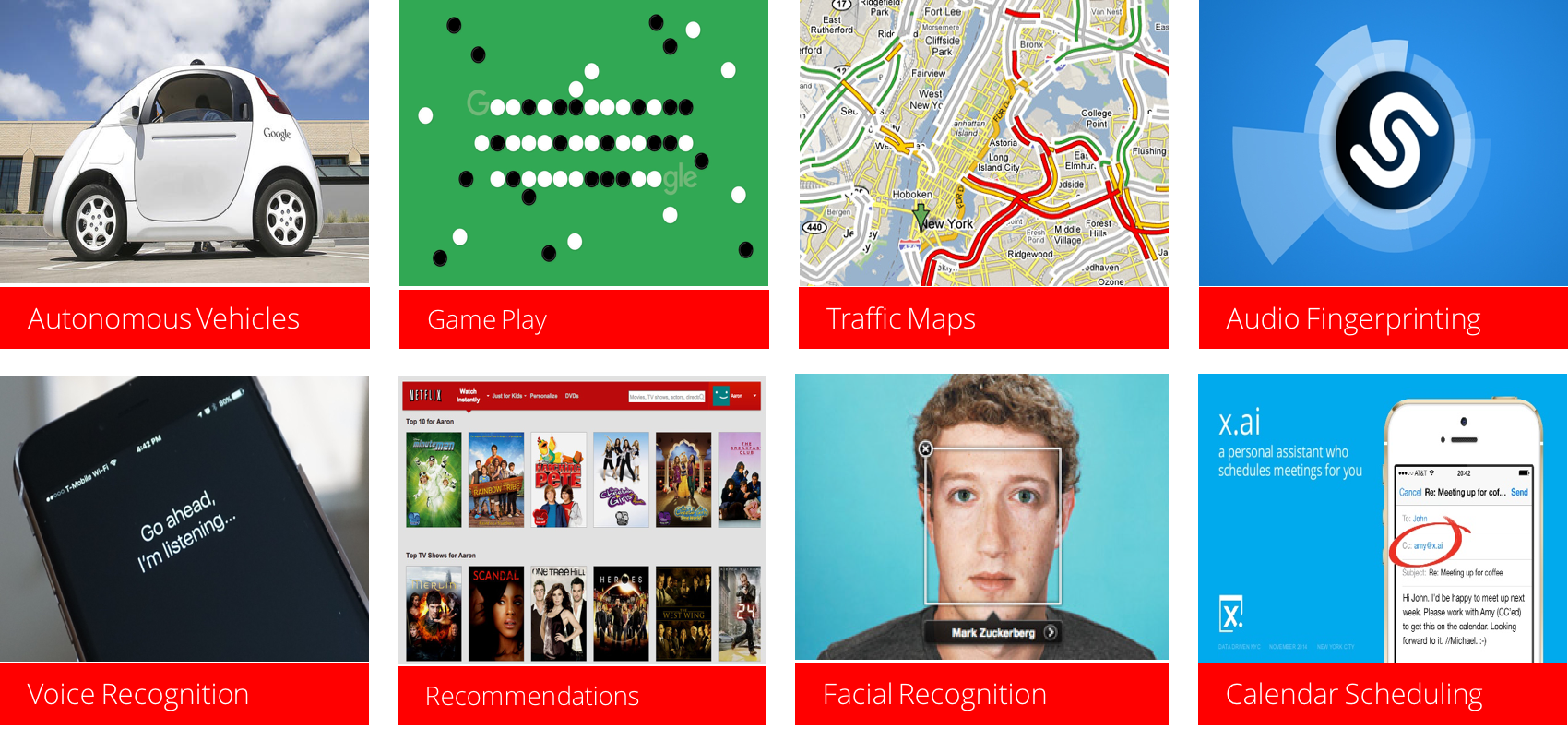

I’ll close this post by providing just a few examples to get the creative wheels turning:

- Self-Driving Cars – Vehicles able to sense the environment and make decisions while following a map to a defined goal.

- Traffic Maps – Maps that are able to predict the best route to a destination and accurately predict the traffic impact of accidents and rush hour.

- Audio Fingerprints – Ability to take a 2-second audio sample and match it to a database of every song ever recorded, to tell you the name of that song.

- Voice Recognition – Ability to understand the spoken word and then use that understanding to accept commands for actions to take on your phone.

- Personalization – Recommend products to buy or media to watch based on understanding the interests of similar users, to maximize the efficiency of discovery.

- Facial Recognition – Ability to learn from a number of photos of a person and then recognize that person when they are seen again later.

- Calendar Scheduling – Ability to negotiate the calendar for someone and schedule a meeting by speaking with them (voice recognition) and then finding a good time on the calendar that meets the requirements.

- Customer Service Chat Bots – A customer service agent that can have a typed conversation with someone, understand their intent, call APIs to find the information the person needs, such as the status of their order, and take actions such as cancelling and refunding an order or providing a status update.

- E-commerce Merchandising – Observe trends on social media as well as purchase trends in the business, to determine the right quantities of inventory to programmatically order and re-order.

- AdTech – Identify better ad placement opportunities that are likely to drive greater return on ad spend.
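
To ground one of these, the Personalization item can be sketched in a few lines of Python. This is a toy version of user-based collaborative filtering, which is one common way to recommend based on similar users and not necessarily what any particular product uses. The users, items, ratings, and function names are all hypothetical.

```python
import math

# Hypothetical rating history: user -> {item: rating from 1 to 5}
RATINGS = {
    "ana":   {"sneakers": 5, "denim jacket": 4, "sunglasses": 1},
    "ben":   {"sneakers": 5, "denim jacket": 5, "backpack": 4},
    "chloe": {"sunglasses": 5, "sandals": 4, "sneakers": 1},
}


def cosine_similarity(a, b):
    """Similarity of two users' ratings, based on the items both have rated."""
    shared = set(a) & set(b)
    if not shared:
        return 0.0
    dot = sum(a[item] * b[item] for item in shared)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b)


def recommend(user, ratings, top_n=3):
    """Rank items the user has not rated, weighted by similar users' ratings."""
    scores = {}
    for other, their_ratings in ratings.items():
        if other == user:
            continue
        similarity = cosine_similarity(ratings[user], their_ratings)
        for item, rating in their_ratings.items():
            if item not in ratings[user]:
                scores[item] = scores.get(item, 0.0) + similarity * rating
    return sorted(scores, key=scores.get, reverse=True)[:top_n]


print(recommend("ana", RATINGS))
```

In this made-up data, ana's tastes overlap most with ben's, so the item ben liked that ana has not seen yet ranks ahead of chloe's.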